Steps to deploy HA MySQL in Amazon EKS using Kubera

Amazon Elastic Kubernetes Service (Amazon EKS) is a fully managed Kubernetes service. EKS runs the Kubernetes control plane across multiple AWS Availability Zones, automatically detects and replaces unhealthy control plane nodes, and provides on-demand, zero-downtime upgrades and patching.

MayaData’s OpenEBS is a leading open source Container Attached Storage solution. Built on a cloud native architecture, it simplifies running stateful applications on Kubernetes.

In this example, we will walk through the steps of deploying a highly available MySQL database on AWS EKS using Kubera.

To run MySQL on Amazon EKS using Kubera, you need to :

- Install AWS three-node EKS cluster using AWS official documentation

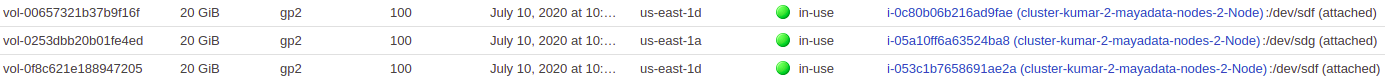

- Attach additional EBS volumes of 20GB on each EC2 instance

- Install Kubera and OpenEBS using a complete user guide for Kubera

- Create a storage class using Kubera documentation

- Create a PVC for MySQL deployment on Kubernetes

- MySQL deployment using Kubernetes

- Test high availability and fault tolerance by failing node, deleting pod

- Test backup and restore capability by restoring the mysqldump

How to set up an Amazon EKS Cluster:

Follow the instructions in the Amazon documentation (AWS EKS Setup) to configure the Amazon EKS cluster.

Once done, we should have a three-node EKS cluster up and running.

    $ kubectl get nodes
    NAME                             STATUS   ROLES    AGE   VERSION
    ip-192-168-26-249.ec2.internal   Ready    <none>   38h   v1.16.8-eks-fd1ea7
    ip-192-168-27-150.ec2.internal   Ready    <none>   38h   v1.16.8-eks-fd1ea7
    ip-192-168-49-2.ec2.internal     Ready    <none>   38h   v1.16.8-eks-fd1ea7

Attach additional EBS volumes of 20GB on each EC2 instance running on EKS.
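One way to script this step is with the AWS CLI. The sketch below is an assumption rather than something taken from the Kubera guide: the `gp2` volume type, the `/dev/sdf` device name, and the helper name `attach_data_volume` are illustrative, and the instance ID and Availability Zone are values you would look up for each worker node.

```shell
# Create an EBS volume in the node's Availability Zone and attach it to
# the given EC2 instance.
# Usage: attach_data_volume <instance-id> <availability-zone> [size-gib]
attach_data_volume() {
  local instance_id="$1" zone="$2" size="${3:-20}" volume_id

  # Create the volume and capture its ID (gp2 is an assumed volume type).
  volume_id="$(aws ec2 create-volume \
    --availability-zone "$zone" --size "$size" --volume-type gp2 \
    --query VolumeId --output text)"

  # A new volume must reach the "available" state before it can be attached.
  aws ec2 wait volume-available --volume-ids "$volume_id"

  # /dev/sdf is an assumed device name; pick one that is free on the instance.
  aws ec2 attach-volume --volume-id "$volume_id" \
    --instance-id "$instance_id" --device /dev/sdf
}
```

For example, `attach_data_volume i-0123456789abcdef0 us-east-1a` would be run once per worker node; the instance ID shown here is a placeholder.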

Connecting your Amazon EKS cluster to Kubera:

Connect your EKS cluster to Kubera and deploy OpenEBS on it. The Kubera user guide has the detailed steps.

Once done, we should see the following pods running:

    $ kubectl get pods -n maya-system
    NAME                                        READY   STATUS      RESTARTS   AGE
    cluster-register-f8qwg                      0/1     Completed   0          24h
    cortex-agent-84967c7949-xxqm4               1/1     Running     0          24h
    cstorpoolauto-0                             1/1     Running     0          24h
    dmaas-agent-57987f79f-vld58                 1/1     Running     0          24h
    dmaas-operator-66d9855d89-2s2jn             1/1     Running     0          24h
    fluentd-aggregator-849bd5b9c9-dgfg9         1/1     Running     0          24h
    fluentd-forwarder-79kvf                     1/1     Running     0          24h
    fluentd-forwarder-f7tln                     1/1     Running     0          24h
    fluentd-forwarder-lkgzq                     1/1     Running     0          24h
    kube-state-metrics-6cf6cd55-wbsnp           2/2     Running     0          24h
    maya-io-agent-7j2xr                         1/1     Running     0          24h
    maya-io-agent-7mjxx                         1/1     Running     0          24h
    maya-io-agent-w6hsc                         1/1     Running     0          24h
    openebs-manager-5c6995cfb9-4xqgw            1/1     Running     0          24h
    openebs-upgrade-59c9b4c58d-gfnkz            1/1     Running     0          24h
    pv-exporter-9jdmp                           2/2     Running     0          24h
    pv-exporter-p7jfk                           2/2     Running     0          24h
    pv-exporter-xb5v4                           2/2     Running     0          24h
    restic-cpcvk                                1/1     Running     0          24h
    restic-kcdh5                                1/1     Running     0          24h
    restic-pgt8b                                1/1     Running     0          24h
    status-agent-547c7498b9-pnr2n               1/1     Running     0          24h
    upgrade-controller-599c5c5bff-4h4n4         1/1     Running     0          24h
    velero-7dcd4b554-d8z8k                      1/1     Running     0          24h
    weave-scope-agent-mjn27                     1/1     Running     0          24h
    weave-scope-agent-t9hsk                     1/1     Running     0          24h
    weave-scope-agent-w8jcj                     1/1     Running     0          24h
    weave-scope-app-84d8cb45fd-zrdc2            1/1     Running     0          24h
    weave-scope-cluster-agent-5b7d45b49-b6tkp   1/1     Running     0          24h

    $ kubectl get pods -n openebs
    NAME                                                              READY   STATUS    RESTARTS   AGE
    cspc-operator-9cd76bdc9-9vpgn                                     1/1     Running   0          24h
    cstorpool-kfjmd-cpsn-7999c4474c-8l7dr                             3/3     Running   0          24h
    cstorpool-kfjmd-f2fx-65d9555fb7-cl85l                             3/3     Running   0          24h
    cstorpool-kfjmd-rn92-554b45486-sr6wr                              3/3     Running   0          24h
    cvc-operator-5b5ff44969-ghvv5                                     1/1     Running   0          24h
    maya-apiserver-58f494f7b4-jrtwg                                   1/1     Running   0          24h
    openebs-admission-server-6f8976bb99-rwggn                         1/1     Running   0          24h
    openebs-cstor-admission-server-5f5548647b-kn5mg                   1/1     Running   0          24h
    openebs-localpv-provisioner-c99575f76-vv96c                       1/1     Running   0          24h
    openebs-ndm-8fn7k                                                 1/1     Running   0          24h
    openebs-ndm-operator-dfd5f688d-54smw                              1/1     Running   1          24h
    openebs-ndm-w5z99                                                 1/1     Running   0          24h
    openebs-ndm-xx795                                                 1/1     Running   0          24h
    openebs-provisioner-58cc5ffd95-9t8ws                              1/1     Running   0          24h
    openebs-snapshot-operator-5f79b8bcb5-wgn2q                        2/2     Running   1          24h
    pvc-6b530389-e7c6-4ab4-a564-a3e99468e2d7-target-7c67bdf5bdgzphw   3/3     Running   0          24h
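With this many pods, it can help to filter the listing down to anything that is not healthy. The helper below is a small sketch (the function name `unhealthy_pods` is made up for this example) that reads `kubectl get pods` output on standard input and prints the names of pods that are neither `Completed` nor `Running` with all of their containers ready.

```shell
# Read `kubectl get pods` output on stdin and print the name of every pod
# that is not Completed and not Running with all of its containers ready.
unhealthy_pods() {
  awk 'NR > 1 && NF {
         split($2, ready, "/")   # READY column, e.g. "2/3"
         healthy = ($3 == "Completed") || ($3 == "Running" && ready[1] == ready[2])
         if (!healthy) print $1
       }'
}

# Usage:
#   kubectl get pods -n maya-system | unhealthy_pods
#   kubectl get pods -n openebs | unhealthy_pods
```

An empty result means every pod in the namespace is either running with all containers ready or has completed.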

Create a storage class for MySQL in Kubernetes:

The storage class object defines the replica count used to keep MySQL data highly available when storage volumes are provisioned dynamically. These parameters can be modified as required, keeping the needs of the stateful application in mind.

Here, we will start with a replica count of 3.

    $ cat > storageclass.yaml <<EOF
    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: openebs-csi-cstor
    provisioner: cstor.csi.openebs.io
    allowVolumeExpansion: true
    parameters:
      cas-type: cstor
      replicaCount: "3"
      cstorPoolCluster: cstorpool-kfjmd
    EOF

    $ kubectl create -f storageclass.yaml
    storageclass.storage.k8s.io "openebs-csi-cstor" created

    $ kubectl get sc
    NAME                   PROVISIONER                    AGE
    gp2 (default)          kubernetes.io/aws-ebs          24h
    openebs-csi-cstor      cstor.csi.openebs.io           9h
    openebs-device         openebs.io/local               9h
    openebs-hostpath       openebs.io/local               9h
    openebs-jiva-default   openebs.io/provisioner-iscsi   9h

Create a MySQL PVC:

We can now create a Persistent Volume Claim (PVC) against this storage class; the volume behind it is provisioned dynamically.

    $ cat > pvc.yaml <<EOF
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: sql-claim
    spec:
      accessModes:
        - ReadWriteOnce
      volumeMode: Filesystem
      resources:
        requests:
          storage: 10Gi
      storageClassName: openebs-csi-cstor
    EOF

    $ kubectl create -f pvc.yaml
    persistentvolumeclaim "sql-claim" created

    $ kubectl get pvc
    NAME        STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS        AGE
    sql-claim   Bound    pvc-6bb8336d-1adf-41f5-a2b0-a8b0189ae767   10Gi       RWO            openebs-csi-cstor   9h
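Dynamic provisioning can take a little while, so a script that goes on to deploy MySQL may want to wait until the claim is actually `Bound`. The following is a minimal sketch of such a wait loop; the function name, the default of 30 attempts, and the 5-second delay are arbitrary choices for illustration.

```shell
# Poll a PVC until its phase is Bound, or give up after a number of attempts.
# Usage: wait_for_pvc_bound <pvc-name> [attempts] [seconds-between-attempts]
wait_for_pvc_bound() {
  local pvc="$1" attempts="${2:-30}" delay="${3:-5}" phase="" i=0
  while [ "$i" -lt "$attempts" ]; do
    # .status.phase is Pending until a volume has been provisioned and bound.
    phase="$(kubectl get pvc "$pvc" -o jsonpath='{.status.phase}' 2>/dev/null || true)"
    if [ "$phase" = "Bound" ]; then
      echo "PVC $pvc is Bound"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "PVC $pvc is still ${phase:-unknown} after $attempts attempts" >&2
  return 1
}
```

Calling `wait_for_pvc_bound sql-claim` right after `kubectl create -f pvc.yaml` returns as soon as the claim is bound and fails with a non-zero status if it never gets there.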

MySQL deployment using Kubernetes:

Finally, create the MySQL pod using a Kubernetes Deployment. We keep the replica count at a single MySQL pod here; it can be scaled to more pods across nodes depending on your needs. Kubera replicates the data to provide high availability across the nodes in the cluster.

    $ cat > mysqldepnew.yaml <<EOF
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mysql-test
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: mysql-test
      template:
        metadata:
          labels:
            app: mysql-test
        spec:
          containers:
            - image: mysql:8.0.20
              name: mysql
              ports:
                - containerPort: 3306
              env:
                - name: MYSQL_ROOT_PASSWORD
                  valueFrom:
                    secretKeyRef:
                      name: mysql-secret
                      key: password
              volumeMounts:
                - mountPath: /var/lib/mysql
                  name: mysqlvol
          volumes:
            - name: mysqlvol
              persistentVolumeClaim:
                claimName: sql-claim
    EOF

Create a secret for MySQL:

    $ kubectl create secret generic mysql-secret --from-literal=password=abc@123

    $ kubectl create -f mysqldepnew.yaml
    deployment.apps/mysql-test created

    $ kubectl get pods
    NAME                          READY   STATUS    RESTARTS   AGE
    mysql-test-64747b7858-hzs4k   1/1     Running   0          11s

    $ kubectl exec -it mysql-test-64747b7858-hzs4k -- mysql -u root -p
    Enter password:
    Welcome to the MySQL monitor. Commands end with ; or \g.
    Your MySQL connection id is 8
    Server version: 8.0.20 MySQL Community Server - GPL

    Copyright (c) 2000, 2020, Oracle and/or its affiliates. All rights reserved.

    Oracle is a registered trademark of Oracle Corporation and/or its
    affiliates. Other names may be trademarks of their respective
    owners.

    Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

    mysql>

Once inside the MySQL prompt, let us add some random data:

    mysql> create database mayadata;
    Query OK, 1 row affected (0.02 sec)

    mysql> use mayadata;
    Database changed

    mysql> create table KUBERA (name varchar(20), city varchar(20), infra varchar(20), vpc varchar(20), storage varchar(20), region varchar(20));
    Query OK, 0 rows affected (0.19 sec)

    mysql> insert into `KUBERA`(`name`, `city`, `infra`, `vpc`, `storage`, `region`) values ('ABN', 'SAN JOSE', 'AWS', 'YES', '50G', 'US-EAST-1'),('IGL', 'SANTACLARA', 'AZURE', 'YES', '40G', 'DOWN-SOUTH-2'),('LGI', 'KENTUCKY', 'AWS', 'NO', '20G', 'US-WEST-2'),('BOEING', 'BRUSSELS', 'OPENSTACK', 'NO', '80G', 'ASIA-PACIFIC'),('NIFTY', 'SANTACRUZ', 'AWS', 'YES', '67G', 'EU-WEST-1');
    Query OK, 5 rows affected (0.54 sec)
    Records: 5 Duplicates: 0 Warnings: 0

    mysql> select * from KUBERA;
    +--------+------------+-----------+------+---------+--------------+
    | name   | city       | infra     | vpc  | storage | region       |
    +--------+------------+-----------+------+---------+--------------+
    | ABN    | SAN JOSE   | AWS       | YES  | 50G     | US-EAST-1    |
    | IGL    | SANTACLARA | AZURE     | YES  | 40G     | DOWN-SOUTH-2 |
    | LGI    | KENTUCKY   | AWS       | NO   | 20G     | US-WEST-2    |
    | BOEING | BRUSSELS   | OPENSTACK | NO   | 80G     | ASIA-PACIFIC |
    | NIFTY  | SANTACRUZ  | AWS       | YES  | 67G     | EU-WEST-1    |
    +--------+------------+-----------+------+---------+--------------+
    5 rows in set (0.00 sec)

    mysql>

Test HA and fault tolerance by failing a node and deleting the pod:

Performing node failure with pod deletion:

Now, let’s simulate a node failure by cordoning the node, which prevents new pods from being scheduled on it. We will then delete the pod running on that node.

    $ kubectl get pods -o wide
    NAME                          READY   STATUS    RESTARTS   AGE   IP              NODE                           NOMINATED NODE   READINESS GATES
    mysql-test-64747b7858-hzs4k   1/1     Running   0          98m   192.168.60.52   ip-192-168-49-2.ec2.internal   <none>           <none>

    $ kubectl cordon ip-192-168-49-2.ec2.internal
    node/ip-192-168-49-2.ec2.internal cordoned

    $ kubectl get nodes
    NAME                             STATUS                     ROLES    AGE   VERSION
    ip-192-168-26-249.ec2.internal   Ready                      <none>   34h   v1.16.8-eks-fd1ea7
    ip-192-168-27-150.ec2.internal   Ready                      <none>   34h   v1.16.8-eks-fd1ea7
    ip-192-168-49-2.ec2.internal     Ready,SchedulingDisabled   <none>   34h   v1.16.8-eks-fd1ea7

    $ kubectl delete pod mysql-test-64747b7858-hzs4k
    pod "mysql-test-64747b7858-hzs4k" deleted

    $ kubectl get pods -o wide
    NAME                          READY   STATUS              RESTARTS   AGE   IP       NODE                             NOMINATED NODE   READINESS GATES
    mysql-test-64747b7858-zscsv   0/1     ContainerCreating   0          17s   <none>   ip-192-168-26-249.ec2.internal   <none>           <none>

    $ kubectl get pods -o wide
    NAME                          READY   STATUS    RESTARTS   AGE   IP               NODE                             NOMINATED NODE   READINESS GATES
    mysql-test-64747b7858-zscsv   1/1     Running   0          32s   192.168.16.240   ip-192-168-26-249.ec2.internal   <none>           <none>

Verify if the data is intact:

    $ kubectl exec -it mysql-test-64747b7858-zscsv -- mysql -u root -p
    Enter password:
    Welcome to the MySQL monitor. Commands end with ; or \g.
    Your MySQL connection id is 9
    Server version: 8.0.20 MySQL Community Server - GPL

    Copyright (c) 2000, 2020, Oracle and/or its affiliates. All rights reserved.

    Oracle is a registered trademark of Oracle Corporation and/or its
    affiliates. Other names may be trademarks of their respective
    owners.

    Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

    mysql> use mayadata;
    Reading table information for completion of table and column names
    You can turn off this feature to get a quicker startup with -A

    Database changed

    mysql> show tables;
    +--------------------+
    | Tables_in_mayadata |
    +--------------------+
    | KUBERA             |
    +--------------------+
    1 row in set (0.00 sec)

    mysql> select * from KUBERA;
    +--------+------------+-----------+------+---------+--------------+
    | name   | city       | infra     | vpc  | storage | region       |
    +--------+------------+-----------+------+---------+--------------+
    | ABN    | SAN JOSE   | AWS       | YES  | 50G     | US-EAST-1    |
    | IGL    | SANTACLARA | AZURE     | YES  | 40G     | DOWN-SOUTH-2 |
    | LGI    | KENTUCKY   | AWS       | NO   | 20G     | US-WEST-2    |
    | BOEING | BRUSSELS   | OPENSTACK | NO   | 80G     | ASIA-PACIFIC |
    | NIFTY  | SANTACRUZ  | AWS       | YES  | 67G     | EU-WEST-1    |
    +--------+------------+-----------+------+---------+--------------+

So finally, we can observe that the MySQL database is still intact.
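The same failover test can be scripted end to end. This is a rough sketch under a few assumptions: the `app=mysql-test` label and the `mayadata.KUBERA` table come from the steps above, the function name is invented, `kubectl wait` is used to wait for the replacement pod, the root password is read inside the container from the `MYSQL_ROOT_PASSWORD` variable that the Deployment sets, and the node is uncordoned again at the end (the manual walkthrough leaves it cordoned).

```shell
# Cordon the node that hosts the MySQL pod, delete the pod, wait for the
# replacement, and print the row count of mayadata.KUBERA from the new pod.
failover_check() {
  local selector="app=mysql-test" pod node new_pod status=1 tries=0

  pod="$(kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')"
  node="$(kubectl get pod "$pod" -o jsonpath='{.spec.nodeName}')"

  kubectl cordon "$node"
  kubectl delete pod "$pod"
  kubectl wait --for=condition=Ready pod -l "$selector" --timeout=300s

  new_pod="$(kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')"

  # mysqld may need a few seconds after the container starts, so retry.
  # -N drops the column header so only the number is printed.
  while [ "$tries" -lt 10 ]; do
    if kubectl exec "$new_pod" -- sh -c \
        'mysql -N -uroot -p"$MYSQL_ROOT_PASSWORD" -e "SELECT COUNT(*) FROM mayadata.KUBERA"'; then
      status=0
      break
    fi
    tries=$((tries + 1))
    sleep 5
  done

  kubectl uncordon "$node"
  return "$status"
}
```

With the five rows inserted earlier, the query should report 5 if the data survived the move to another node.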

Snapshot feature in Kubera and restore of the database:

With the Kubera snapshot feature, we can take snapshots of running applications and restore them as required.

Create a snapshot class pointing to the cStor CSI driver:

    $ kubectl apply -f https://raw.githubusercontent.com/openebs/cstor-csi/master/deploy/snapshot-class.yaml

Create a snapshot after updating the PVC and snapshot name in the following YAML (the example it is based on is at https://raw.githubusercontent.com/openebs/cstor-csi/master/examples/csi-snapshot.yaml):

    $ cat > csi-snapshot.yaml <<EOF
    apiVersion: snapshot.storage.k8s.io/v1beta1
    kind: VolumeSnapshot
    metadata:
      name: demo-snapshot-new
    spec:
      volumeSnapshotClassName: csi-cstor-snapshotclass
      source:
        persistentVolumeClaimName: sql-claim
    EOF

    $ kubectl apply -f csi-snapshot.yaml

Verify that the snapshot has been created successfully:

    $ kubectl get volumesnapshots.snapshot
    NAME                AGE
    demo-snapshot-new   27h

Create the volume clone from the above snapshot by updating the following YAML:

    $ cat clone.yaml
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: pvc-clone-new
    spec:
      storageClassName: openebs-csi-cstor
      dataSource:
        name: demo-snapshot-new
        kind: VolumeSnapshot
        apiGroup: snapshot.storage.k8s.io
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 12Gi

Create the PVC from this file (for example, with kubectl apply -f clone.yaml), then verify that it has been successfully created:

    $ kubectl get pvc
    NAME                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS        AGE
    mysql-mysql-test-0   Lost     pvc-72812989-5ef8-4340-8285-6babc6972e3b   0                         openebs-csi-cstor   44h
    pvc-clone-new        Bound    pvc-376bbabf-93a9-496c-bf1e-1231cab5eaef   12Gi       RWO            openebs-csi-cstor   24h
    sql-claim            Bound    pvc-055f471d-5005-45b6-adcc-58715e263f2f   10Gi       RWO            openebs-csi-cstor   27h

Next, we will create a deployment from the new PVC:

    $ cat mysql_clone.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mysql-snap
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: mysql-test-new
      template:
        metadata:
          labels:
            app: mysql-test-new
        spec:
          containers:
            - image: mysql:8.0.20
              name: mysql
              ports:
                - containerPort: 3306
              env:
                - name: MYSQL_ROOT_PASSWORD
                  valueFrom:
                    secretKeyRef:
                      name: mysql-secret
                      key: password
              volumeMounts:
                - mountPath: /var/lib/mysql
                  name: mypd
          volumes:
            - name: mypd
              persistentVolumeClaim:
                claimName: pvc-clone-new

    $ kubectl create -f mysql_clone.yaml
    deployment.extensions "mysql-snap" created

Verify the pod created is running:

    $ kubectl get pods
    NAME                          READY   STATUS    RESTARTS   AGE
    mysql-snap-7656c9c7cd-5xxj8   1/1     Running   0          24h
    mysql-test-64747b7858-n2fkn   1/1     Running   0          27h

Verify if the data is intact and present:

    $ kubectl exec -it mysql-snap-7656c9c7cd-5xxj8 -- mysql -u root -p
    Enter password:
    Welcome to the MySQL monitor. Commands end with ; or \g.
    Your MySQL connection id is 9
    Server version: 8.0.20 MySQL Community Server - GPL

    Copyright (c) 2000, 2020, Oracle and/or its affiliates. All rights reserved.

    Oracle is a registered trademark of Oracle Corporation and/or its
    affiliates. Other names may be trademarks of their respective
    owners.

    Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

    mysql> show databases;
    +--------------------+
    | Database           |
    +--------------------+
    | information_schema |
    | mayadata           |
    | mysql              |
    | performance_schema |
    | sys                |
    +--------------------+
    5 rows in set (0.01 sec)

    mysql> use mayadata;
    Reading table information for completion of table and column names
    You can turn off this feature to get a quicker startup with -A

    Database changed

    mysql> select * from KUBERA;
    +--------+------------+-----------+------+---------+--------------+
    | name   | city       | infra     | vpc  | storage | region       |
    +--------+------------+-----------+------+---------+--------------+
    | ABN    | SAN JOSE   | AWS       | YES  | 50G     | US-EAST-1    |
    | IGL    | SANTACLARA | AZURE     | YES  | 40G     | DOWN-SOUTH-2 |
    | LGI    | KENTUCKY   | AWS       | NO   | 20G     | US-WEST-2    |
    | BOEING | BRUSSELS   | OPENSTACK | NO   | 80G     | ASIA-PACIFIC |
    | NIFTY  | SANTACRUZ  | AWS       | YES  | 67G     | EU-WEST-1    |
    +--------+------------+-----------+------+---------+--------------+
    5 rows in set (0.00 sec)

    mysql>

Finally, we observe that the data is intact and present.
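The list of steps at the start also mentions restoring from a mysqldump, which is a logical backup rather than a volume snapshot. As a hedged sketch of how that could look with the pods used here, the two helpers below dump a database to a local file and load it back through `kubectl exec`. The function names are hypothetical, the pods are looked up by the `app` labels from the Deployments above, and the root password is taken from the `MYSQL_ROOT_PASSWORD` variable inside the container.

```shell
# Dump one database from the pod selected by a label into a local file.
# Usage: mysql_dump <label-selector> <database> <output-file>
mysql_dump() {
  local selector="$1" db="$2" out="$3" pod
  pod="$(kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')"
  # --databases adds CREATE DATABASE and USE statements to the dump.
  kubectl exec "$pod" -- sh -c \
    "mysqldump -uroot -p\"\$MYSQL_ROOT_PASSWORD\" --databases $db" > "$out"
}

# Load a dump file into the pod selected by a label.
# Usage: mysql_restore <label-selector> <dump-file>
mysql_restore() {
  local selector="$1" dump="$2" pod
  pod="$(kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')"
  # -i keeps stdin open so the dump file can be piped into the mysql client.
  kubectl exec -i "$pod" -- sh -c \
    'mysql -uroot -p"$MYSQL_ROOT_PASSWORD"' < "$dump"
}
```

For example, `mysql_dump app=mysql-test mayadata mayadata.sql` followed by `mysql_restore app=mysql-test-new mayadata.sql` would copy the database from the original pod into the pod running on the cloned volume.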

That's it for today. I hope you find the blog helpful. Please leave your valuable comments or feedback in the comment section below.
